ARC-AGI-3 and the Un-Harnessed Frontier

A Benchmark, or a Signal?

ARC-AGI-3 launched on March 25, and most people will focus on the least interesting fact about it.

ARC-AGI-3 scores AI on action efficiency relative to a human baseline: a metric called RHAE. A perfect 100% means the system matches human efficiency across every game. Frontier models score well under 1%: they barely complete levels, and when they do, they burn far more actions than a human. The obvious reading is that AGI remains far away.
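To make "action efficiency relative to a human baseline" concrete, here is a simplified sketch in Python. It rests on several assumptions. Each level's score is the ratio of human-baseline actions to agent actions, capped at 1. An unfinished level scores 0. Levels, and then games, are averaged with equal weight. This is not the official RHAE definition, which may weight or cap things differently, and `LevelResult`, `level_efficiency`, and `efficiency_score` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LevelResult:
    human_actions: int            # actions the human baseline needed (assumed > 0)
    agent_actions: Optional[int]  # actions the agent used; None if it never finished


def level_efficiency(r: LevelResult) -> float:
    """Assumed per-level score: human/agent action ratio, capped at 1.0."""
    if r.agent_actions is None or r.agent_actions <= 0:
        return 0.0  # an unfinished level earns nothing in this sketch
    return min(1.0, r.human_actions / r.agent_actions)


def efficiency_score(games: list[list[LevelResult]]) -> float:
    """Average levels within each game, then average games, as a percentage."""
    per_game = [sum(level_efficiency(r) for r in levels) / len(levels) for levels in games]
    return 100.0 * sum(per_game) / len(per_game)


if __name__ == "__main__":
    games = [
        [LevelResult(10, 10), LevelResult(20, None)],  # human pace, then stuck
        [LevelResult(15, 1500)],                        # finished, but with 100x the actions
    ]
    print(f"{efficiency_score(games):.1f}%")  # prints 25.5%
```

Under these assumptions, finishing a level is not enough. An agent that needs 100 times the human action count earns almost nothing for that level, which is why "barely completes levels, and burns far more actions" translates into a very low score.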

A more useful reading is this: ARC-AGI-3 may be one of the clearest early signals that cybersecurity is the next major frontier for AI, because it appears to measure the part of intelligence that still resists being trained into the model.

It's a strong claim, but ARC benchmarks have earned the right to be read that way. They matter less as scoreboards than as early measurements of capability shifts, before those shifts harden into product categories.

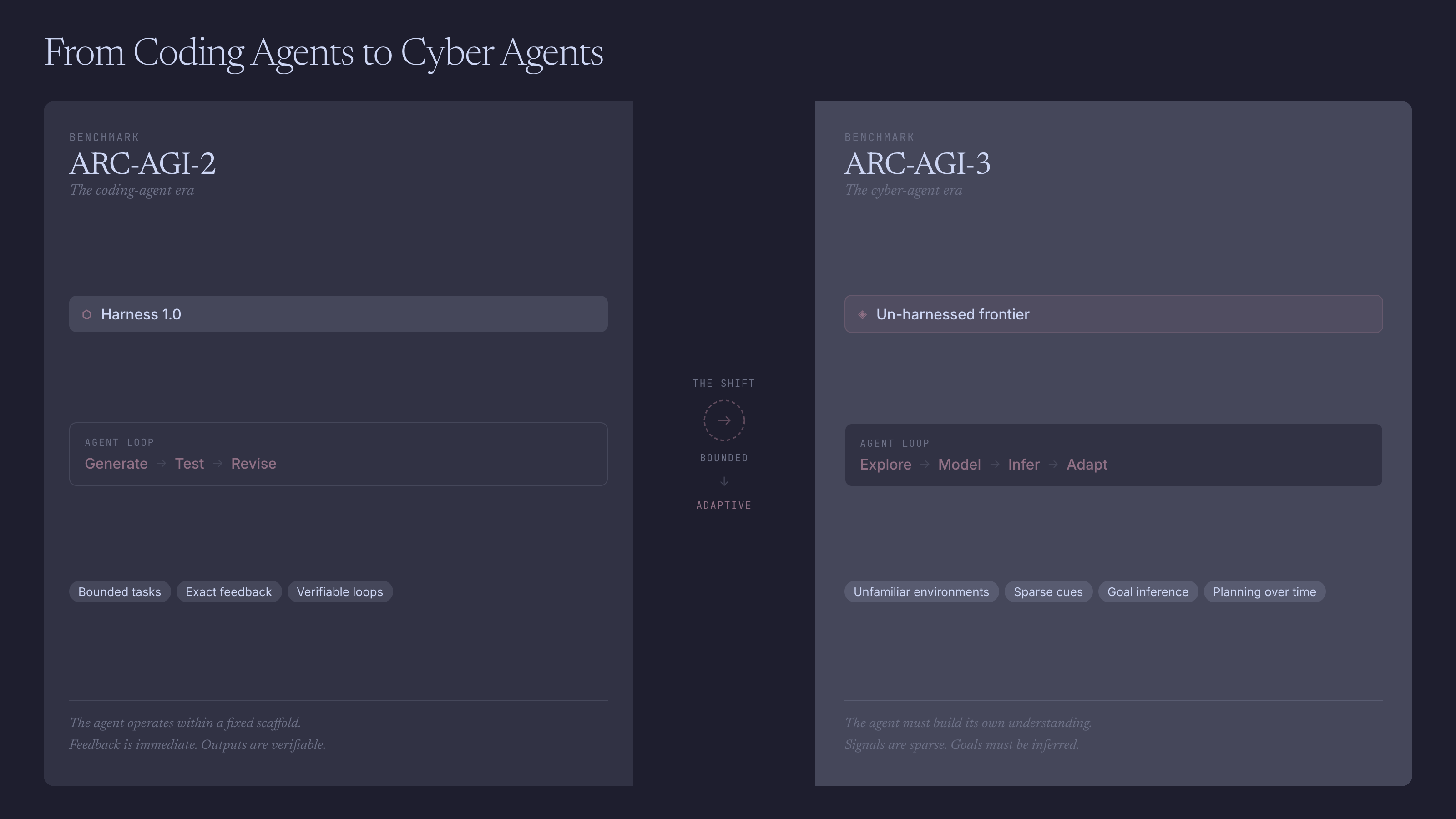

In hindsight, ARC-AGI-2 tracked the rise of test-time reasoning in verifiable domains. The ARC-AGI-3 paper makes a similar point: ARC-AGI-1 and ARC-AGI-2 signaled advances that later found product-market fit in coding tools like Claude Code and Codex.

That does not mean the benchmarks caused coding agents. It means they measured the loop those agents later commercialized: generate, test, inspect, revise, repeat. Coding was the perfect proving ground because the environment gives exact feedback. The code compiles or it doesn't. The tests pass or they fail. The harness keeps looping.
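The generate, test, inspect, revise loop is simple enough to sketch. The minimal Python version below assumes a `generate` function that proposes a candidate from the latest feedback and a `verify` function that plays the role of the compiler or test suite. Both callables and the `harness_loop` name are hypothetical stand-ins, not any real agent framework's API.

```python
import itertools
from typing import Callable, Optional

# Proposes a candidate given the latest feedback (None on the first attempt).
Generator = Callable[[Optional[str]], str]
# Returns (passed, feedback), like a compiler or a test suite would.
Verifier = Callable[[str], tuple[bool, str]]


def harness_loop(generate: Generator, verify: Verifier, max_iters: int = 10) -> Optional[str]:
    feedback: Optional[str] = None
    for _ in range(max_iters):
        candidate = generate(feedback)        # generate
        passed, feedback = verify(candidate)  # test and inspect
        if passed:
            return candidate
        # revise: the feedback string is handed to the next generate() call
    return None  # budget exhausted without a passing candidate


if __name__ == "__main__":
    counter = itertools.count(1)
    # Toy "model" that ignores feedback and simply counts upward.
    result = harness_loop(
        lambda feedback: str(next(counter)),
        lambda c: (int(c) ** 2 == 49, f"{c}^2 != 49"),
    )
    print(result)  # prints 7
```

The point of the toy is the shape, not the model: the loop only works because `verify` gives an exact pass or fail signal, which is what coding environments provide and what many real-world environments do not.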

Harness 1.0

The first coding-agent wave was never just a model story. It was a systems story. Tools, tests, retries, decomposition, and search turned strong models into useful operators. Then part of that harness got trained back into the model. Once labs had enough traces of tool use, corrections, and successful multi-step trajectories, behaviors that once required explicit orchestration started to look native.

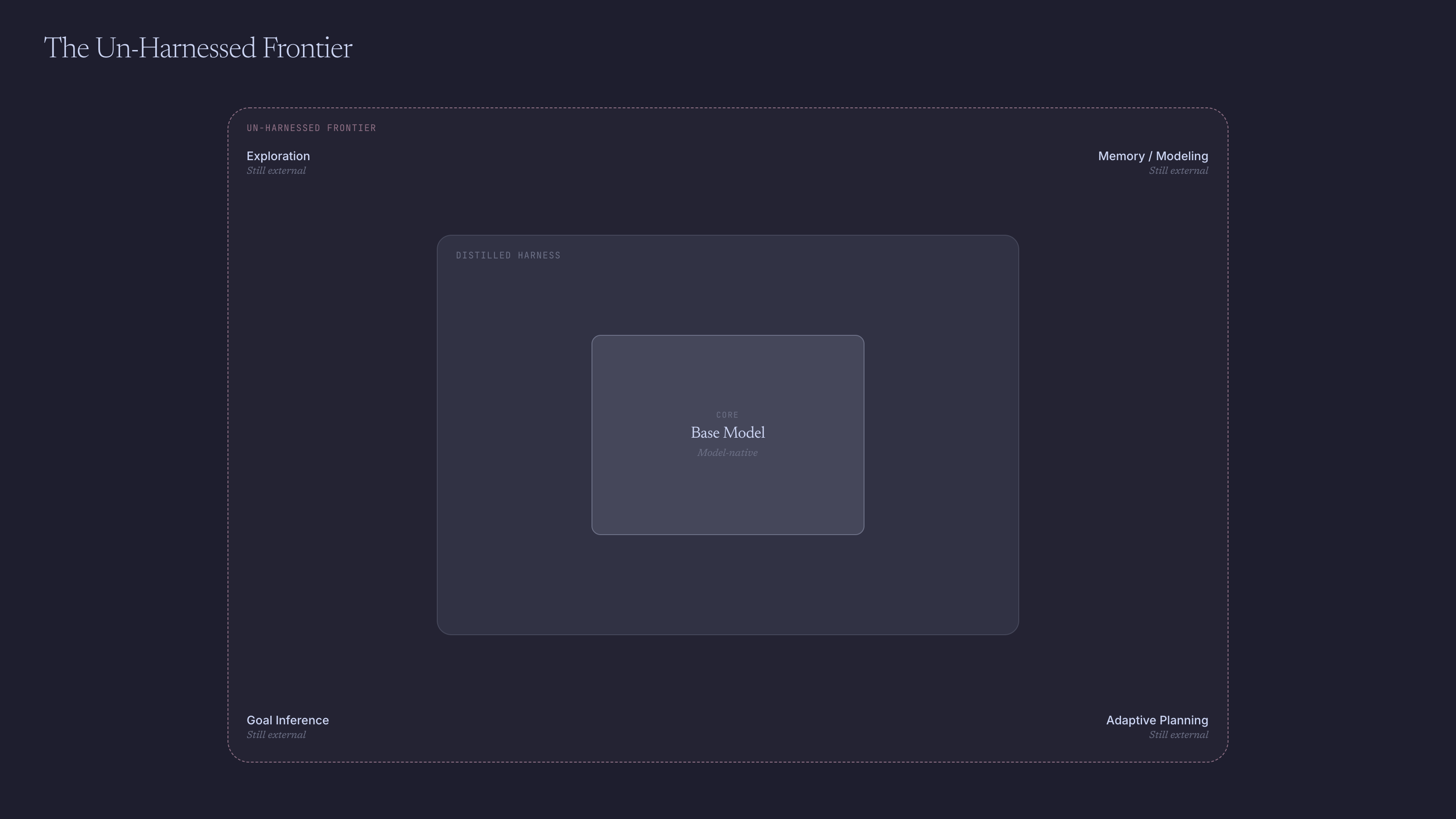

That is why ARC-AGI-3 matters. It seems to probe the layer that still resists compression into the model: the un-harnessed frontier.

The Un-Harnessed Frontier

The official paper frames ARC-AGI-3 as a shift from static reasoning to agentic intelligence. Its four core components are exploration, modeling, goal-setting, and planning and execution. The public docs use slightly different language and speak more explicitly about memory, but the idea is the same. The system is not told the objective. It has to explore before it understands, infer what matters from sparse cues, build a stateful picture of the environment, and adapt over time.

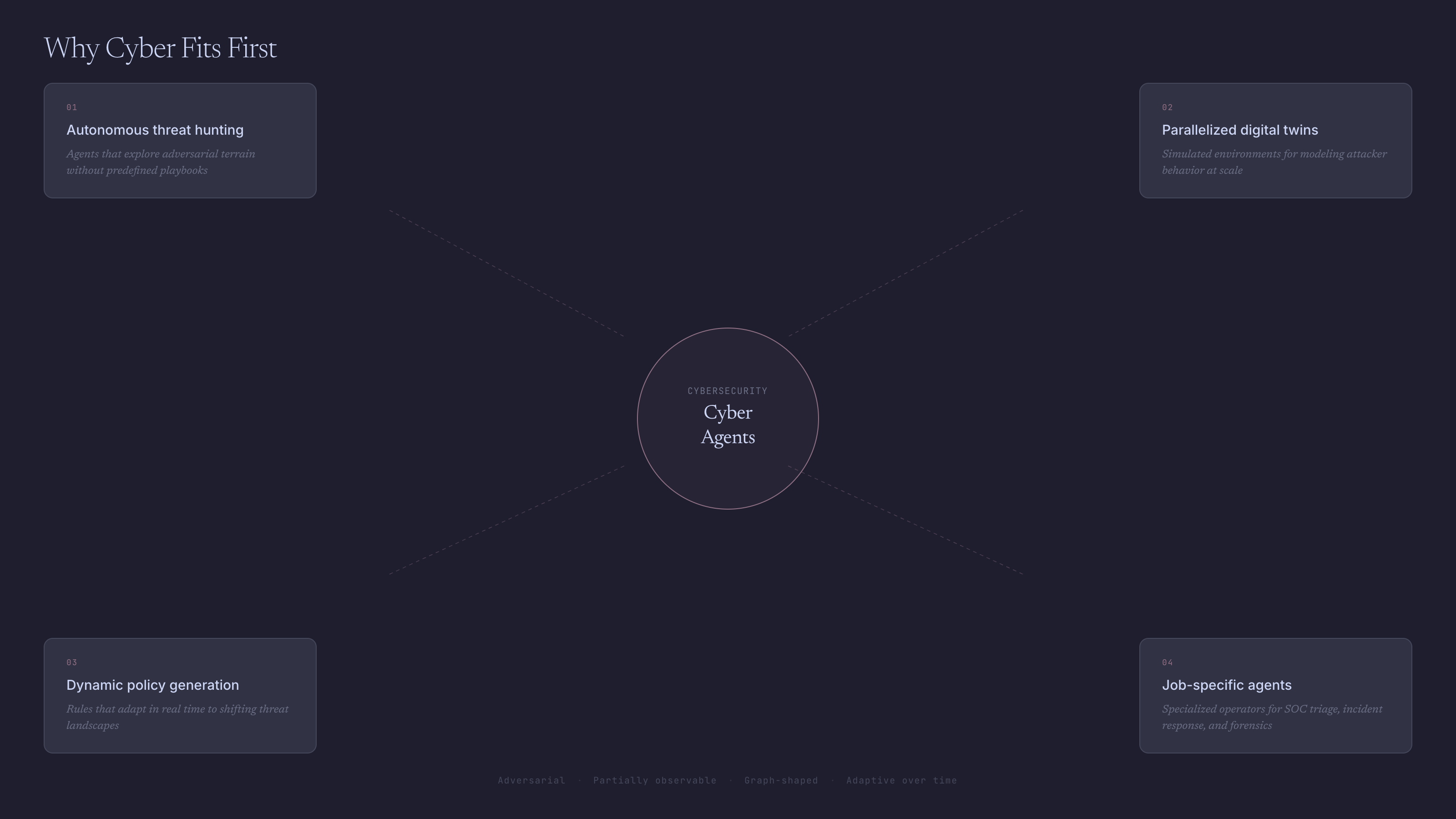

That is not just a harder puzzle. It is a different demand, and it maps cleanly onto many of the highest-leverage workflows in cybersecurity.

This is also what makes the benchmark economically interesting. The next important AI markets will not be won by systems that simply answer questions better. They will be won by systems that can enter an unfamiliar environment, reduce uncertainty, and act efficiently. ARC-AGI-3 measures exactly that: not just whether the agent succeeds, but how efficiently it gets there.

Why Cyber Fits First

Cybersecurity is one of the clearest domains where that capability stack matters.

Threat hunting isn't classification. It's search: moving through noisy telemetry, forming a hypothesis, gathering evidence, revising the picture, and remembering what has already been ruled out. The objective is often unclear at the start. The hunter has to decide what matters before deciding what is true.
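Framing threat hunting as search with memory can be sketched in a few lines. The Python sketch below assumes that each hypothesis can be checked by a `gather_evidence` function returning "confirmed", "ruled_out", or "inconclusive", and that an `expand` function proposes follow-up hypotheses. Both functions, the `HuntState` structure, and the hypothesis names are hypothetical illustrations, not a real hunting tool.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable

Verdict = str  # "confirmed", "ruled_out", or "inconclusive" in this sketch


@dataclass
class HuntState:
    open_hypotheses: list[str]
    ruled_out: set[str] = field(default_factory=set)
    confirmed: list[str] = field(default_factory=list)
    steps: int = 0  # number of hypotheses actually investigated


def hunt(
    state: HuntState,
    gather_evidence: Callable[[str], Verdict],
    expand: Callable[[str, Verdict], Iterable[str]],
    budget: int = 20,
) -> HuntState:
    while state.open_hypotheses and state.steps < budget:
        h = state.open_hypotheses.pop(0)
        if h in state.ruled_out or h in state.confirmed:
            continue  # memory: never re-investigate a settled question
        state.steps += 1
        verdict = gather_evidence(h)
        if verdict == "confirmed":
            state.confirmed.append(h)
        elif verdict == "ruled_out":
            state.ruled_out.add(h)
        # Revise the picture: queue follow-ups that are not already settled or queued.
        for nxt in expand(h, verdict):
            if nxt not in state.ruled_out and nxt not in state.confirmed and nxt not in state.open_hypotheses:
                state.open_hypotheses.append(nxt)
    return state


if __name__ == "__main__":
    evidence = {"phishing": "confirmed", "vpn_bruteforce": "ruled_out", "lateral_smb": "confirmed"}
    follow_ups = {"phishing": ["lateral_smb"], "lateral_smb": ["exfil_dns", "vpn_bruteforce"]}
    result = hunt(
        HuntState(["vpn_bruteforce", "phishing"]),
        gather_evidence=lambda h: evidence.get(h, "inconclusive"),
        expand=lambda h, v: follow_ups.get(h, []) if v == "confirmed" else [],
    )
    print(result.confirmed, sorted(result.ruled_out), result.steps)
    # prints ['phishing', 'lateral_smb'] ['vpn_bruteforce'] 4
```

In the toy run, `vpn_bruteforce` comes back up as a follow-up to lateral movement, but it has already been ruled out, so no effort is spent on it a second time.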

Digital twins push the same logic further. Instead of testing one attack path or one defensive policy at a time, you can spawn many searches across mirrored environments, simulate attacker movement, test controls, compare outcomes, and learn from the state space. That is not just automation. It is a different way to generate cyber understanding.
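The "spawn many searches, test controls, compare outcomes" idea can be illustrated as reachability search over a toy model of an environment. This is a deliberately simplified sketch. It assumes the twin is a directed graph of hosts, where an edge means an attacker can move from one host to the next. It also assumes a control is nothing more than a set of blocked edges. Real digital twins would model far more state, such as identities, vulnerabilities, and timing. The host names and the `reachable` and `compare_controls` helpers are hypothetical.

```python
from collections import deque

Graph = dict[str, list[str]]


def reachable(graph: Graph, start: str, blocked: frozenset = frozenset()) -> set[str]:
    """Breadth-first search over the mirrored environment, skipping blocked edges."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if (node, nxt) in blocked or nxt in seen:
                continue
            seen.add(nxt)
            queue.append(nxt)
    return seen


def compare_controls(graph: Graph, entry: str, target: str, controls: dict[str, frozenset]) -> dict[str, bool]:
    """Run one search per candidate control set; True means the target is still reachable."""
    return {name: target in reachable(graph, entry, blocked) for name, blocked in controls.items()}


if __name__ == "__main__":
    twin = {
        "internet": ["web01"],
        "web01": ["app01", "jump01"],
        "app01": ["db01"],
        "jump01": ["db01"],
    }
    controls = {
        "baseline": frozenset(),
        "block_app_to_db": frozenset({("app01", "db01")}),
        "block_all_to_db": frozenset({("app01", "db01"), ("jump01", "db01")}),
    }
    print(compare_controls(twin, "internet", "db01", controls))
    # prints {'baseline': True, 'block_app_to_db': True, 'block_all_to_db': False}
```

The toy result shows why comparing outcomes across many searches matters: blocking the obvious app-to-database path on its own leaves the jump-host route open.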

Policy generation is another example. Most security policy is still written as if the environment were static. Real environments are not. Assets change. Workflows change. Threats change. The interesting opportunity is not just to enforce policy, but to infer which policy should exist in the first place, then refine it as the environment evolves. What people call AI policy generation today is usually nowhere near that. In most cases, it just automates the old layer.

The future is probably not one general cyber copilot. It is a stack of job-specific agents: a threat hunter, an attack-path explorer, an incident triage agent, a remediation planner, a policy-hardening agent, and a purple-team simulator. The value comes from specialization plus coordination, not one chat box with broad access.

The Market Is Still One Layer Too Shallow

The current market is still aimed at the already-harnessed layer. Much of AI security today consists of alert summarization, query generation, triage assistance, report drafting, natural-language interfaces over existing tools, and tightly bounded workflow agents. Useful products, yes. But they mostly target workflows where the objective is already known. They automate the known path.

You can see that in how the category talks about itself. Microsoft still frames Security Copilot as an assistive experience. Google is more ambitious and explicitly argues that traditional predefined playbooks break on novel threats; its Triage and Investigation Agent gets closer to the real loop by planning investigations, refining searches, and gathering evidence. But that, too, is mostly a clever Harness 1.0 system. CrowdStrike and SentinelOne are pushing further toward custom and proactive agents, but still inside governed, human-in-the-loop systems.

That is real progress. It is also a tell. The market is moving toward bounded agency, not yet toward the un-harnessed frontier.

The gap is not only technical. It is talent. Most strong AI talent does not understand cyber deeply. Most strong cyber talent is not building at the frontier of models, agents, and systems design. That intersection is still rare, which is exactly why it may become one of the biggest early opportunities in the next AI wave.

To state the claim carefully, but plainly: ARC-AGI-3 does not prove that autonomous cyber systems are here. It does suggest that the next important capability stack is exploration, modeling, goal inference, memory, and adaptive planning in unfamiliar environments. Cybersecurity is where that stack is most likely to become economically important first.

If that is right, the next wave in cyber will not be defined by better copilots. It will be defined by teams building for the un-harnessed frontier. And that matters for more than startup formation. Over the last year, the easier adoption curve has often belonged to attackers. This may be one of the first shifts that gives defenders, and the people who care about stability, resilience, and prosperity, a chance to regain ground.

If you are researching or building at the intersection of frontier AI and cybersecurity, especially around threat hunting, digital twins, policy generation, or job-specific agents, I would love to talk.